A new paper, led by LIV.INNO PhD student Qiyuan Xu, has just been published in Physical Review Accelerators and Beams. The paper presents a synthetic-data-driven machine-learning approach to beam imaging for radiation-constrained accelerator environments.

The paper explores how a standard camera can be moved away from a high-radiation area by relaying the optical signal through a multimode fiber and then reconstructing the original transverse beam distribution from the scrambled output pattern. The work addresses an important practical challenge in beam instrumentation: cameras and related electronics are prone to degradation in high-radiation areas, which makes reliable imaging in accelerator facilities difficult.

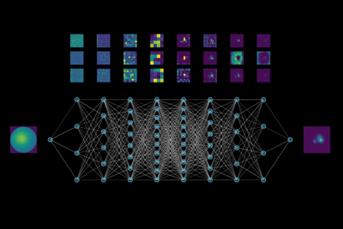

Qiyuan and collaborators from the University of Liverpool and CERN investigate how light from a beam-imaging screen is transported through a single large-core multimode fiber to a shielded location, where a conventional CMOS camera can operate safely. The difficulty in such a setup is that transmission through the fiber produces a complex speckle pattern rather than a directly usable image. Recovering the original beam image therefore becomes an inverse problem that is well suited to machine learning. In practice, however, training such models requires large amounts of paired data, which are difficult to obtain under real beam conditions because beam time is limited and experimental datasets often do not cover enough variation.

To address this, the team developed a training workflow based entirely on synthetic data generated using a Stochastic Gaussian Mixture model. This was used to create a large and diverse set of transverse beam distributions for training. The samples were displayed on a laser-illuminated digital micromirror device in a laboratory setup and transmitted through the same 5 m multimode fiber used in the optical tests. A custom convolutional autoencoder was then trained exclusively on the synthetic data, while real beam images from CERN’s CLEAR facility, replayed through the same setup, were reserved for evaluation.
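As a rough illustration of the idea behind such synthetic training data, the sketch below draws random beam-like images as sums of a few 2D Gaussians. It is not the model from the paper: the function name `sample_beam_image`, the image size, the number of components, and every parameter range are assumptions chosen only to make the example concrete.

```python
import numpy as np

def sample_beam_image(rng, size=64, max_components=4):
    """Draw one synthetic transverse beam distribution as a size x size image.

    Hypothetical sketch: all parameter ranges below are illustrative
    assumptions, not values taken from the paper.
    """
    # Pixel coordinates normalised to [-1, 1] on both axes.
    axis = np.linspace(-1.0, 1.0, size)
    x, y = np.meshgrid(axis, axis)
    image = np.zeros((size, size))

    # Random number of Gaussian components (at least one).
    n_components = rng.integers(1, max_components + 1)
    for _ in range(n_components):
        weight = rng.uniform(0.2, 1.0)          # relative intensity
        cx, cy = rng.uniform(-0.5, 0.5, size=2)  # centre position
        sx, sy = rng.uniform(0.05, 0.3, size=2)  # widths along principal axes
        theta = rng.uniform(0.0, np.pi)          # orientation of the ellipse

        # Rotate coordinates into the component's principal axes.
        dx, dy = x - cx, y - cy
        u = np.cos(theta) * dx + np.sin(theta) * dy
        v = -np.sin(theta) * dx + np.cos(theta) * dy
        image += weight * np.exp(-0.5 * ((u / sx) ** 2 + (v / sy) ** 2))

    # Scale so the brightest pixel equals 1.
    return image / image.max()

rng = np.random.default_rng(seed=0)
dataset = np.stack([sample_beam_image(rng) for _ in range(8)])
```

Randomising the position, size, orientation, and number of components is one simple way to obtain beams of different sizes, positions, and more complex shapes. In a workflow like the one described, each such image would be displayed on the micromirror device and paired with the speckle pattern recorded at the fiber output.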

Qiyuan said: “Our results show that the method can successfully recover the main structure of real beam images transmitted through the fiber, including beams with different sizes, positions, and more complex shapes. We also found that the synthetic dataset helped reduce issues linked to imbalance in the real data, suggesting that carefully designed simulated data can provide a practical training route when experimental calibration data are limited.”

This work is currently a proof-of-concept study, but it points toward a feasible route for more radiation-tolerant and remote beam imaging systems. Development will now focus on reducing the gap between laboratory light sources and real scintillation-screen emission, validating the approach further on real beam data, and studying long-term stability under environmental perturbations such as temperature changes, vibration, and accumulated radiation dose. Together, these steps could help move the method closer to practical use on real beamlines.

Further information:

Q. Xu, H. Zhang, G. Trad, A.D. Hill, F. Roncarolo, and C.P. Welsch, Development of radiation-tolerant beam imaging via multimode fiber and synthetic data-driven machine learning, Phys. Rev. Accel. Beams (2026).